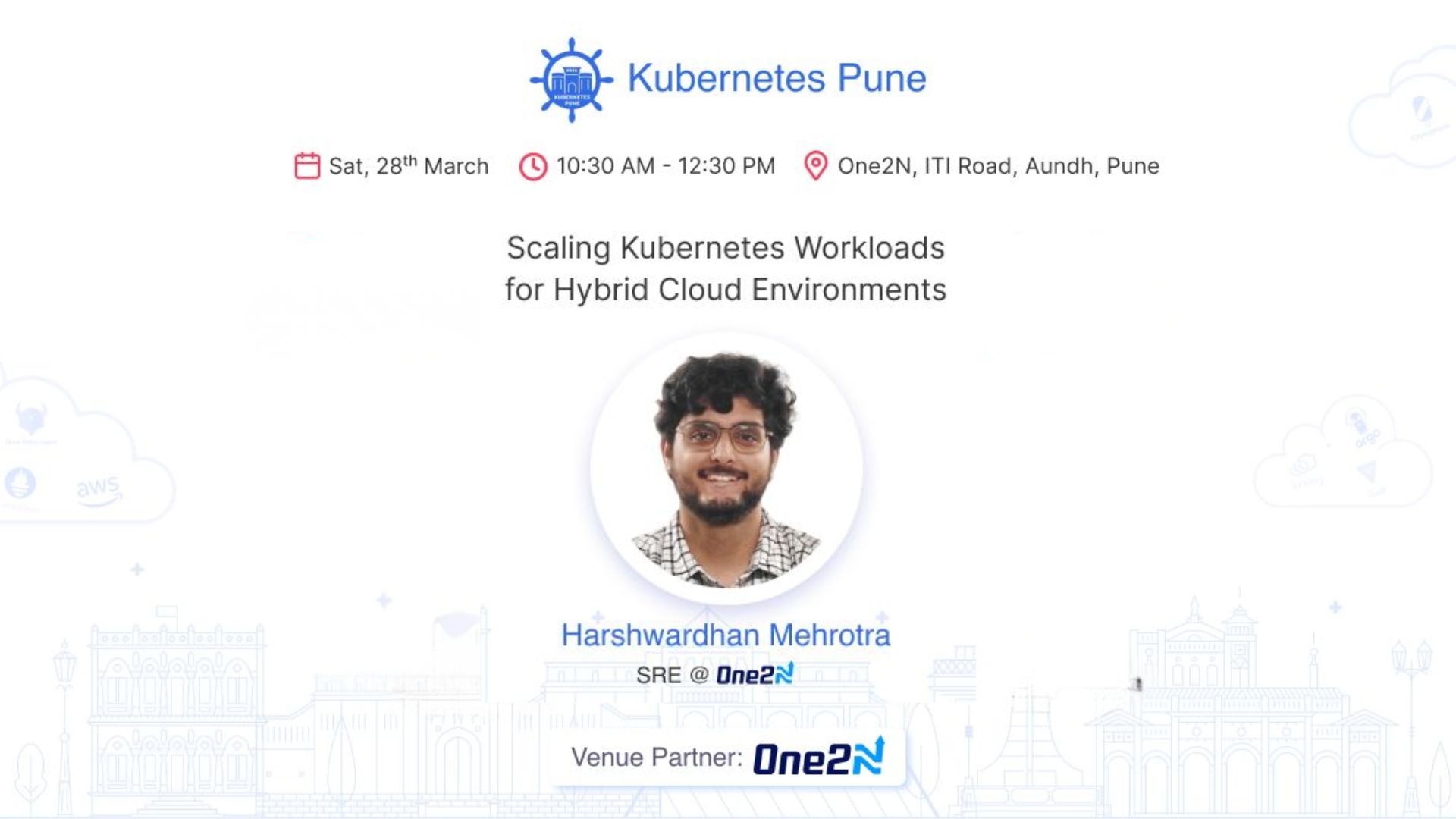

ABC of LLMOps - What does it take to run self-hosted LLMs | Rootconf mini 2024

Feb 5, 2025

Running Self-Hosted LLMs in Production: An SRE’s Experiment

Lessons from deploying open-source models on Kubernetes GPUs, managing vector DBs & building RAG apps

🧠 Why We Did This

Most companies today rely on OpenAI APIs for GenAI workflows, but what happens when you need control over data privacy, costs, or custom models? As SREs managing backend systems at scale, we wanted answers to:

“How do you run LLMOps pipelines without depending on OpenAI?”

“What infrastructure gaps emerge when moving from prototypes to production?”

“Is owning GPU hardware better than cloud for steady-state workloads?”

This talk documents our 6-month experiment to learn LLMOps from first principles while building internal tools like a resume-filtering RAG app.

The Experiment

Phase 1: Learning First Principles

Models: Started with lightweight models (Phi-3) → progressed to Llama 3 & Mistral for complex tasks.

Toolchain: Tested LangChain → hit limitations → migrated core logic to LlamaIndex for production needs.

Vector DBs: Ran Qdrant locally → stress-tested embedding storage/retrieval latency at scale.
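To make the latency stress test concrete, here is a minimal sketch of how retrieval latency percentiles could be collected. It is not the harness used in the talk: `brute_force_search` is a hypothetical in-memory stand-in for a vector DB query, and the vector sizes and query counts are arbitrary. The point is the measurement loop, which can wrap any search function.

```python
import math
import random
import statistics
import time


def cosine(a, b):
    """Cosine similarity between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0


def brute_force_search(store, query, top_k=5):
    """Hypothetical stand-in for a vector DB query: rank every stored vector."""
    ranked = sorted(store.items(), key=lambda kv: cosine(kv[1], query), reverse=True)
    return [doc_id for doc_id, _ in ranked[:top_k]]


def measure_latency(search_fn, queries):
    """Run each query once and return p50/p95/max latency in milliseconds."""
    samples = []
    for query in queries:
        start = time.perf_counter()
        search_fn(query)
        samples.append((time.perf_counter() - start) * 1000)
    # 99 cut points; index 94 is the 95th percentile.
    cuts = statistics.quantiles(samples, n=100, method="inclusive")
    return {"p50": statistics.median(samples), "p95": cuts[94], "max": max(samples)}


# Arbitrary synthetic data, used only to exercise the measurement loop.
random.seed(0)
store = {f"doc-{i}": [random.random() for _ in range(8)] for i in range(50)}
queries = [[random.random() for _ in range(8)] for _ in range(20)]
stats = measure_latency(lambda q: brute_force_search(store, q), queries)
```

Swapping the lambda for a call into a real vector DB client leaves `measure_latency` unchanged, which keeps results comparable as the collection grows.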

Phase 2: Building Infrastructure Muscle

GPUs on Kubernetes: Deployed Ray/KubeRay clusters → optimized GPU utilization vs cost tradeoffs.

Observability: Added metrics for prompt latency, token usage & DB query performance early (critical!).
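As an illustration of the kind of instrumentation this implies, the sketch below records latency per operation and running token totals in memory. All names here (`LLMMetrics`, `fake_generate`) are hypothetical, and the token counts are made up; a production setup would export these values to a metrics backend rather than keep them in a dict.

```python
import time
from collections import defaultdict
from contextlib import contextmanager


class LLMMetrics:
    """In-memory recorder for latency samples and token totals (illustrative only)."""

    def __init__(self):
        self.latencies_ms = defaultdict(list)  # operation name -> latency samples
        self.tokens = defaultdict(int)         # "prompt" / "completion" -> running total

    @contextmanager
    def timed(self, operation):
        """Time the wrapped block and record it, even if the block raises."""
        start = time.perf_counter()
        try:
            yield
        finally:
            self.latencies_ms[operation].append((time.perf_counter() - start) * 1000)

    def add_tokens(self, prompt_tokens, completion_tokens):
        self.tokens["prompt"] += prompt_tokens
        self.tokens["completion"] += completion_tokens

    def summary(self):
        return {
            "calls": {op: len(v) for op, v in self.latencies_ms.items()},
            "avg_latency_ms": {op: sum(v) / len(v) for op, v in self.latencies_ms.items()},
            "tokens": dict(self.tokens),
        }


def fake_generate(prompt):
    """Hypothetical model call: counts words as a crude proxy for tokens."""
    return {"text": "ok", "prompt_tokens": len(prompt.split()), "completion_tokens": 1}


metrics = LLMMetrics()
with metrics.timed("vector_db_query"):
    context = ["resume snippet"]  # placeholder for a retrieval call
with metrics.timed("llm_generate"):
    result = fake_generate("Summarize this resume for the hiring manager")
metrics.add_tokens(result["prompt_tokens"], result["completion_tokens"])
```

Timing the retrieval and generation steps separately makes it possible to tell whether a slow response came from the vector DB or from the model.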

Phase 3: Shipping Real Workflows

Built a resume-filtering RAG app (dogfooded internally).

Lessons learned: Prompt engineering ≠ one-time effort; versioning embeddings matters; cold starts hurt UX.
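One way to read "versioning embeddings matters": vectors produced by different embedding models or chunking settings should never be mixed in one index. The sketch below is an assumption about how that could be enforced, not the approach described in the talk. `EmbeddingVersion`, `VersionedIndex`, and the model names are all hypothetical.

```python
import hashlib
from dataclasses import dataclass


@dataclass(frozen=True)
class EmbeddingVersion:
    """Everything that changes the meaning of a stored vector."""
    model_name: str
    model_revision: str
    chunking: str

    def tag(self) -> str:
        """Short, stable fingerprint of this embedding configuration."""
        raw = "|".join((self.model_name, self.model_revision, self.chunking))
        return hashlib.sha256(raw.encode("utf-8")).hexdigest()[:12]

    def collection_name(self, base: str) -> str:
        """Embed the fingerprint in the collection name so versions never share storage."""
        return f"{base}__{self.tag()}"


class VersionedIndex:
    """Toy index that rejects vectors built with a different embedding version."""

    def __init__(self, version):
        self.version = version
        self.vectors = {}

    def add(self, doc_id, vector, version):
        if version != self.version:
            raise ValueError(
                f"embedding version {version.tag()} does not match index {self.version.tag()}"
            )
        self.vectors[doc_id] = vector

    def needs_reindex(self, current_version):
        """True when the active embedding config differs from the one this index was built with."""
        return current_version != self.version


# Hypothetical versions: same model name, different revision.
v1 = EmbeddingVersion("example-embedder", "rev-1", "512-token chunks")
v2 = EmbeddingVersion("example-embedder", "rev-2", "512-token chunks")
index = VersionedIndex(v1)
index.add("resume-001", [0.12, 0.98], v1)
```

With this shape, upgrading the embedding model means building a new collection under a new name and switching over, instead of silently comparing incompatible vectors.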

Key Takeaways

Start Small but Think Production

Going from toy apps → internal tools → customer-facing pipelines requires rethinking infra (e.g., scaling vector DBs).

Own Your Stack If…

Compliance/data privacy is non-negotiable

Steady-state inference demand justifies GPU capex
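The capex question comes down to break-even arithmetic. The sketch below is a simplified model under stated assumptions: cloud GPUs are billed only for the hours actually used, owned hardware costs a fixed amount up front plus a flat monthly operating cost, and depreciation and resale value are ignored. Every number in the example is a placeholder, not a figure from the talk.

```python
def breakeven_months(capex, owned_opex_per_month, cloud_rate_per_gpu_hour,
                     gpus, utilization, hours_per_month=730):
    """Months until owned hardware becomes cheaper than renting, or None if it never does.

    utilization is the fraction of each month the GPUs are busy (0.0 to 1.0).
    """
    if not 0.0 <= utilization <= 1.0:
        raise ValueError("utilization must be between 0 and 1")
    cloud_monthly = cloud_rate_per_gpu_hour * gpus * hours_per_month * utilization
    monthly_saving = cloud_monthly - owned_opex_per_month
    if monthly_saving <= 0:
        return None  # renting is cheaper at this utilization
    return capex / monthly_saving


# Placeholder inputs only: 4 GPUs, hypothetical prices.
steady = breakeven_months(capex=100_000, owned_opex_per_month=1_000,
                          cloud_rate_per_gpu_hour=2.0, gpus=4, utilization=0.8)
bursty = breakeven_months(capex=100_000, owned_opex_per_month=1_000,
                          cloud_rate_per_gpu_hour=2.0, gpus=4, utilization=0.1)
```

Under these placeholder inputs the high-utilization case pays back in a finite number of months, while the low-utilization case returns `None`, which is the "steady-state demand" condition expressed as a calculation.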

Avoid Framework Lock-In

LangChain is great for prototyping, but frameworks like LlamaIndex offer better control for SREs managing uptime/SLAs.

Who Should Care?

This talk isn’t about AI theory; it’s a playbook for engineers tasked with operationalizing LLMs:

SREs/DevOps teams planning GPU clusters or hybrid cloud AI infra

Engineers struggling with OpenAI API costs/limitations

Teams building RAG apps that need vector DB + model tuning expertise